Value iteration is a fundamental algorithm in reinforcement learning (RL) used to find the optimal policy for a Markov Decision Process (MDP). It iteratively updates the value of each state by considering all possible actions and successor states, progressively refining its estimates until they converge to the optimal value function. This allows an agent to find the strategy that maximizes its cumulative reward over time.

Understanding Markov Decision Processes (MDPs)

Before moving to the value iteration algorithm, it's important to understand the basics of a Markov Decision Process, which is defined by:

- States (S): A set of all possible situations in the environment.

- Actions (A): A set of actions that an agent can take.

- Transition Model (P): The probability P(s′∣s, a) of transitioning from state s to state s′ after taking action a.

- Reward Function (R): The immediate reward received after transitioning from state s to state s′ due to action a.

- Discount Factor (γ): A factor between 0 and 1 that discounts future rewards.

The goal of an MDP is to find an optimal policy π* that maximizes the agent's expected cumulative discounted reward.
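To make the role of the discount factor concrete, the short sketch below computes the discounted return of a fixed reward sequence. The function name `discounted_return` and the sample rewards are illustrative assumptions, not part of the original example.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over a finite reward sequence."""
    total = 0.0
    # Work backwards so each step applies one multiplication by gamma.
    for r in reversed(rewards):
        total = r + gamma * total
    return total

# Hypothetical reward sequence, chosen only for illustration.
rewards = [10, 7, 10, 7]
print(discounted_return(rewards, gamma=0.9))
print(discounted_return(rewards, gamma=0.0))  # only the first reward counts
```

With a discount factor close to 1 the later rewards contribute almost fully, while a value of 0 makes the agent consider only the immediate reward.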

Key Steps of the Value Iteration Algorithm

1. Initialization

Start by initializing the value function V(s) for all states, typically to zero.

2. Value Update

Iteratively update the value function using the Bellman optimality equation:

V_{k+1}(s) = \max_{a \in A} \sum_{s'} P(s'|s,a) \left[ R(s,a,s') + \gamma V_k(s') \right]

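This equation calculates the expected cumulative reward for taking action a in state s and then acting according to the current estimate V_k, and keeps the maximum over all actions.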
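As a rough illustration of a single update, the sketch below performs one Bellman backup for one state. The nested-dictionary layout `P[s][a]` (a list of `(probability, next_state, reward)` tuples), the function name `bellman_backup`, and the two-state MDP are all assumptions made for this sketch.

```python
def bellman_backup(s, V, P, gamma):
    """Return the max over actions of the expected one-step return from s."""
    best = float("-inf")
    for a, outcomes in P[s].items():
        # Expected value of taking action a in state s under the estimate V.
        q = sum(p * (r + gamma * V[s_next]) for p, s_next, r in outcomes)
        best = max(best, q)
    return best

# Hypothetical two-state MDP used only to exercise the function.
P = {
    "A": {"stay": [(1.0, "A", 1.0)],
          "go": [(0.5, "A", 0.0), (0.5, "B", 4.0)]},
    "B": {"stay": [(1.0, "B", 2.0)]},
}
V = {"A": 0.0, "B": 10.0}
print(bellman_backup("A", V, P, gamma=0.9))
```

Here "stay" is worth 1.0 and "go" is worth 0.5 * 0 + 0.5 * (4 + 0.9 * 10) = 6.5, so the backup returns the larger of the two.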

3. Convergence Check

Continue iterating until the value function converges, i.e., until the largest change in the value function between iterations is smaller than a predefined threshold ε.
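The stopping rule can be sketched as a comparison of two successive value tables under the maximum norm. The helper name `has_converged` and the sample values are hypothetical.

```python
def has_converged(V_old, V_new, epsilon):
    """True when the largest per-state change is below epsilon."""
    delta = max(abs(V_new[s] - V_old[s]) for s in V_old)
    return delta < epsilon

# Hypothetical value tables from two consecutive sweeps.
V_old = {0: 1.0, 1: 2.0}
V_new = {0: 1.004, 1: 2.006}
print(has_converged(V_old, V_new, epsilon=0.01))
print(has_converged(V_old, V_new, epsilon=0.001))
```

A smaller threshold gives a tighter approximation of the optimal values at the cost of more sweeps over the state space.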

4. Extracting the Optimal Policy

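Once the value function has converged, the optimal policy π* can be derived by selecting, in each state, the action that maximizes the expected cumulative reward: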

\pi^*(s) = \arg\max_{a \in A} \sum_{s'} P(s'|s,a) \left[ R(s,a,s') + \gamma V^*(s') \right]

Example: Simple MDP Setup

Let’s apply the Value Iteration algorithm to a simple MDP with three states (s_1, s_2, s_3) and two actions (a_1, a_2):

1. Transition Model P(s′∣s, a)

P(s_2|s_1, a_1) = 1

P(s_3|s_1, a_2) = 1

P(s_1|s_2, a_1) = 1

P(s_3|s_2, a_2) = 1

P(s_1|s_3, a_1) = 1

P(s_2|s_3, a_2) = 1

2. Reward Function

R(s_1, a_1, s_2) = 10

R(s_1, a_2, s_3) = 5

R(s_2, a_1, s_1) = 7

R(s_2, a_2, s_3) = 3

R(s_3, a_1, s_1) = 4

R(s_3, a_2, s_2) = 8

Using the value iteration algorithm, we can find the optimal policy and value function for this MDP.
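Because every transition in this MDP is deterministic, the optimal values can also be worked out by hand. The derivation below assumes γ = 0.9 (the discount factor used in the implementation) and starts from the guess that s_1 and s_2 both choose a_1; the last two lines check that no state gains by switching actions. Values are rounded to two decimals.

```latex
\begin{align*}
V^*(s_1) &= 10 + 0.9\,V^*(s_2), \qquad V^*(s_2) = 7 + 0.9\,V^*(s_1) \\
\Rightarrow\; V^*(s_1) &= \frac{10 + 0.9 \cdot 7}{1 - 0.9^2} = \frac{16.3}{0.19} \approx 85.79,
\qquad V^*(s_2) \approx 7 + 0.9 \cdot 85.79 \approx 84.21 \\
V^*(s_3) &= \max\bigl(4 + 0.9 \cdot 85.79,\; 8 + 0.9 \cdot 84.21\bigr)
          = \max(81.21,\; 83.79) = 83.79 \\
s_1, a_2 &:\; 5 + 0.9 \cdot 83.79 \approx 80.41 < 85.79 \\
s_2, a_2 &:\; 3 + 0.9 \cdot 83.79 \approx 78.41 < 84.21
\end{align*}
```

The resulting policy is a_1 in s_1, a_1 in s_2 and a_2 in s_3. An iterative run with a small threshold should land close to these values, though not exactly on them.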

Implementation of the Value Iteration Algorithm

Now, let’s implement the Value Iteration algorithm in Python.

Step 1: Define the MDP Components

In this step, we import the NumPy library and define the states, actions, transition model and reward function that govern the system. The states s_1, s_2, s_3 are encoded as 0, 1, 2 and the actions a_1, a_2 as 0, 1.

import numpy as np

states = [0, 1, 2]
actions = [0, 1]

def transition_model(s, a, s_next):
    # Deterministic transitions: probability 1 for the listed triples, else 0.
    if (s == 0 and a == 0 and s_next == 1):
        return 1
    elif (s == 0 and a == 1 and s_next == 2):
        return 1
    elif (s == 1 and a == 0 and s_next == 0):
        return 1
    elif (s == 1 and a == 1 and s_next == 2):
        return 1
    elif (s == 2 and a == 0 and s_next == 0):
        return 1
    elif (s == 2 and a == 1 and s_next == 1):
        return 1
    return 0

def reward_function(s, a, s_next):
    # Immediate reward for each allowed transition; 0 for anything else.
    if s == 0 and a == 0 and s_next == 1:
        return 10
    elif s == 0 and a == 1 and s_next == 2:
        return 5
    elif s == 1 and a == 0 and s_next == 0:
        return 7
    elif s == 1 and a == 1 and s_next == 2:
        return 3
    elif s == 2 and a == 0 and s_next == 0:
        return 4
    elif s == 2 and a == 1 and s_next == 1:
        return 8
    return 0

gamma = 0.9      # discount factor
epsilon = 0.01   # convergence threshold
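Before running the algorithm, it can help to confirm that the transition model defines a valid probability distribution for every state-action pair. The helper `check_transition_model` below is a hypothetical addition; it is shown with a compact stand-in for the transition function (`demo_transition_model`, also an assumption) so that the snippet runs on its own.

```python
import math

def check_transition_model(states, actions, transition_model):
    """Return the (s, a) pairs whose outgoing probabilities do not sum to 1."""
    bad = []
    for s in states:
        for a in actions:
            total = sum(transition_model(s, a, s_next) for s_next in states)
            if not math.isclose(total, 1.0):
                bad.append((s, a))
    return bad

# Stand-in encoding of the example MDP: (state, action) -> next state.
NEXT_STATE = {(0, 0): 1, (0, 1): 2, (1, 0): 0,
              (1, 1): 2, (2, 0): 0, (2, 1): 1}

def demo_transition_model(s, a, s_next):
    return 1 if NEXT_STATE[(s, a)] == s_next else 0

print(check_transition_model([0, 1, 2], [0, 1], demo_transition_model))
```

An empty list means every state-action pair passes the check; any pair that is returned points to a missing or duplicated branch in the model.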

Step 2: Value Iteration Process

Here we implement the Value Iteration process that iteratively updates the value of each state until convergence.

def value_iteration(states, actions, transition_model, reward_function, gamma, epsilon):
    # Start with a value of 0 for every state.
    V = {s: 0 for s in states}

    while True:
        delta = 0
        for s in states:
            v = V[s]
            # Bellman update; V is overwritten in place during the sweep.
            V[s] = max(sum(transition_model(s, a, s_next) *
                           (reward_function(s, a, s_next) + gamma * V[s_next])
                           for s_next in states) for a in actions)
            delta = max(delta, abs(v - V[s]))
        # Stop once the largest change in a sweep falls below epsilon.
        if delta < epsilon:
            break

    # Extract the greedy policy with respect to the converged values.
    policy = {}
    for s in states:
        policy[s] = max(actions,
                        key=lambda a: sum(
                            transition_model(s, a, s_next) *
                            (reward_function(s, a, s_next) + gamma * V[s_next])
                            for s_next in states))
    return policy, V
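The function above overwrites `V[s]` while it sweeps over the states, whereas the update equation is written in terms of a separate V_k and V_{k+1}. As a sketch of that synchronous form, the NumPy version below stores the model in arrays indexed as `P[s, a, s_next]` and `R[s, a, s_next]`. The array layout, the `model` dictionary and the name `value_iteration_numpy` are assumptions made for illustration.

```python
import numpy as np

def value_iteration_numpy(P, R, gamma, epsilon):
    """Synchronous value iteration on arrays shaped (S, A, S)."""
    V = np.zeros(P.shape[0])
    while True:
        # Q[s, a] = sum over s' of P[s, a, s'] * (R[s, a, s'] + gamma * V[s'])
        Q = np.sum(P * (R + gamma * V), axis=2)
        V_new = Q.max(axis=1)
        delta = np.max(np.abs(V_new - V))
        V = V_new
        if delta < epsilon:
            break
    # Greedy policy with respect to the final value estimate.
    Q = np.sum(P * (R + gamma * V), axis=2)
    return Q.argmax(axis=1), V

# The example MDP: (state, action) -> (next state, reward).
model = {(0, 0): (1, 10), (0, 1): (2, 5), (1, 0): (0, 7),
         (1, 1): (2, 3), (2, 0): (0, 4), (2, 1): (1, 8)}
P = np.zeros((3, 2, 3))
R = np.zeros((3, 2, 3))
for (s, a), (s_next, r) in model.items():
    P[s, a, s_next] = 1.0
    R[s, a, s_next] = r

policy, V = value_iteration_numpy(P, R, gamma=0.9, epsilon=0.01)
print("Policy:", policy)
print("Values:", V)
```

Both variants converge to the same fixed point. The in-place version often needs fewer sweeps, while the array version maps more directly onto the equation and avoids Python-level loops over states.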

Step 3: Running the Algorithm

Now we call the value_iteration function with the defined parameters and display the results (optimal policy and value function).

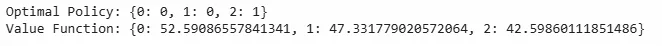

policy, value_function = value_iteration(states, actions, transition_model, reward_function, gamma, epsilon)

print("Optimal Policy:", policy)

print("Value Function:", value_function)

Output:

Applications of Value Iteration

Value iteration is used in various applications like:

- Robotics: For path planning and decision-making in uncertain, dynamic environments.

- Game Development: For creating intelligent agents that can make optimal decisions.

- Finance: For optimizing investment strategies and managing portfolios.

- Operations Research: For solving complex decision-making problems in logistics and supply chain management.

- Healthcare: For optimizing treatment plans and balancing short-term costs with long-term health outcomes.

By mastering Value Iteration, we can solve complex decision-making problems in dynamic, uncertain environments and apply it to real-world challenges across various domains.